The Internet company Google scans the images and emails of its cloud customers for photos and videos of child abuse. The company reports hits from its automatic searches to the local police authorities after review by a central clearing house. What sounds sensible in principle has already led to accusations against innocent parents in the United States.

Parents had sent images of their sick infants' genitals to paediatricians for consultation. In at least one case, Google blocked a user's account and deleted the user's image archives along with many years' worth of emails. The New York Times reported on the false accusations and their consequences over the weekend.

In an interview with the newspaper, “Mark”, one of the affected Google users, who remained anonymous, described how Google made all his account data, emails and calendars inaccessible, how he lost access to his digital identity and his digital image archives as a result, and what consequences this has had for his everyday life. He also reported that Google did not lift the ban even after the local police had examined the case and declared it harmless.

The cases raise questions about the extent to which Google protects the privacy of its users - and to what extent the company has the results of its automatic search verified by humans before forwarding them to the police.

The cases also show how dependent users become on the goodwill of the Internet giant when they tie all their Internet services, logins, photo archives and mail to Google user accounts across Gmail, Google Cloud and the company's Chrome browser. The more intensively users rely on Google services, the more vulnerable they are to an unjustified account suspension.

According to research by WELT AM SONNTAG in 2021, Google also scans user data in its cloud storage and email services in Germany and automatically searches for child abuse material. This does not only concern pictures that are actively sent by email: it is sufficient if the user has activated Google's cloud backup for photos on an Android phone, for example.

Google's expert Claire Lilley had assured WELT AM SONNTAG that all cases would be examined in detail by humans, following a standardized procedure, before being passed on to the authorities. But this procedure appears to have failed, at least in the cases described by the New York Times.

In Germany, too, investigators are receiving a lot of false alarms from Google, according to the 2021 research by WELT AM SONNTAG: experts from the NRW State Criminal Police Office put the error rate at around 40 percent in the past. "So some algorithm produces a bunch of false accusations - and the taxpayer then has to sort this garbage," said Patrick Breyer, a member of the European Parliament for the Pirate Party, commenting on the corporations' contribution to the fight against abuse images on the Internet.

The debate has since taken on new relevance: the EU is currently discussing whether to oblige providers to automatically check messages on the Internet. The proposal covers not only depictions of child abuse, but also so-called cybergrooming, i.e. attempts by adults to contact minors via chat.

The plans are extremely controversial among the governments of the EU member states - the German government, for example, sent the EU Commission a list of 61 questions in June, some of which were very critical.

In the document, published by the Netzpolitik platform, the federal government refers to commitments in the coalition agreement of the "traffic light" coalition, according to which private communication is to be protected and end-to-end encryption is to be maintained as the standard for data protection and cyber security.

The federal government's experts asked, among other things: "How mature are state-of-the-art technologies for avoiding false hits?" and "What proportion of false positive hits can be expected (...)?"

The EU Commission's answers are vague - among other things, the Commission refuses to set a minimum level of maturity for the scanner technology, measured by the error rate. The Commission also finds error rates of up to ten percent of all hits acceptable. How exactly the scanning should work without violating the privacy of the users and breaking the end-to-end encryption is not clear from the answers.

Scanning on the device itself, the only technical approach that would get around encryption, is rejected by large providers. WhatsApp parent company Meta, for example, says it wants to protect the rights of users and warns of possible abuse by authoritarian regimes. Apple had announced on-device scanning for the iPhone but backed away from it after protests.

"Everything on shares" is the daily stock exchange shot from the WELT business editorial team. Every morning from 7 a.m. with our financial journalists. For stock market experts and beginners. Subscribe to the podcast on Spotify, Apple Podcast, Amazon Music and Deezer. Or directly via RSS feed.

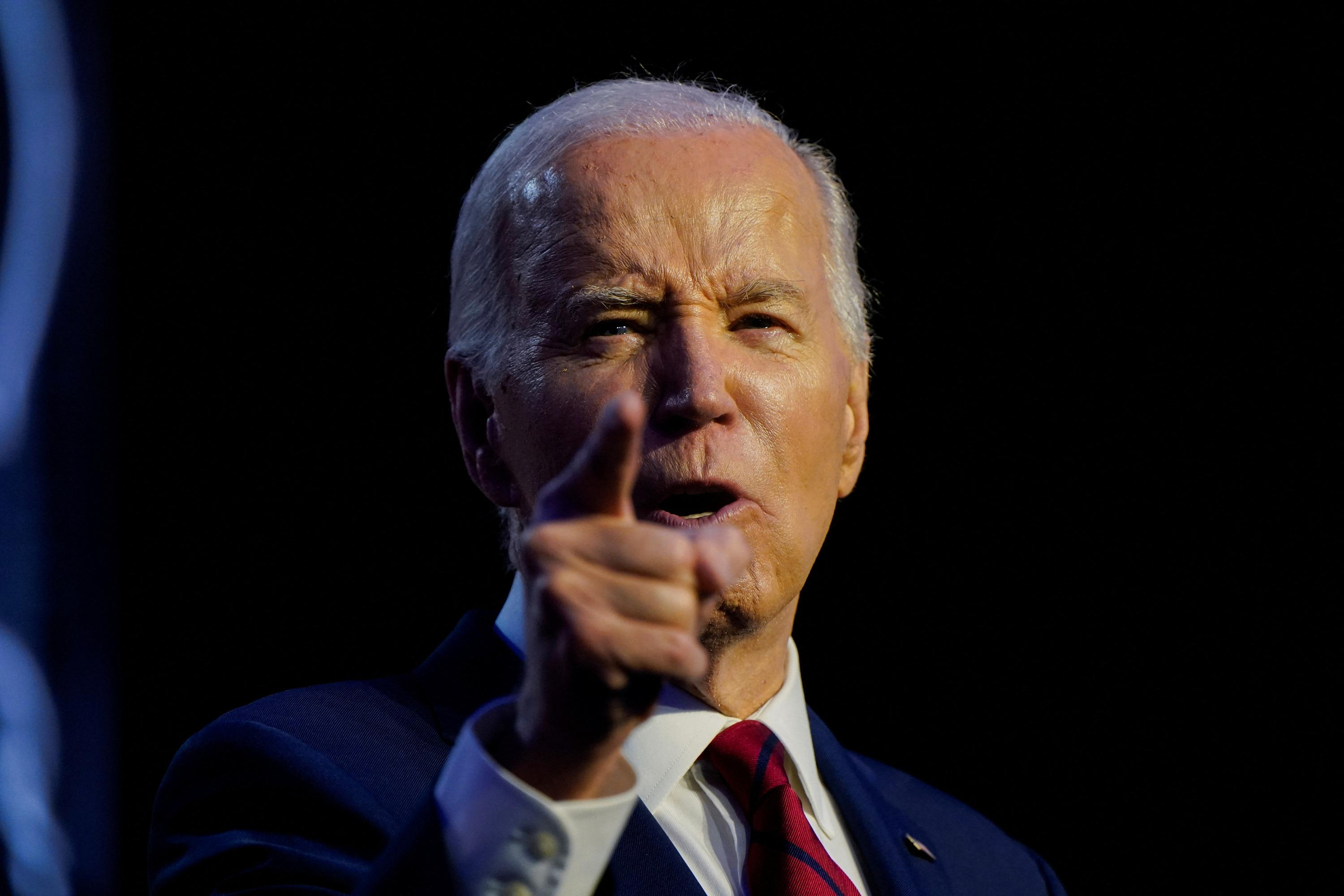

United States: divided on the question of presidential immunity, the Supreme Court offers respite to Trump

United States: divided on the question of presidential immunity, the Supreme Court offers respite to Trump Maurizio Molinari: “the Scurati affair, a European injury”

Maurizio Molinari: “the Scurati affair, a European injury” Hamas-Israel war: US begins construction of pier in Gaza

Hamas-Israel war: US begins construction of pier in Gaza Israel prepares to attack Rafah

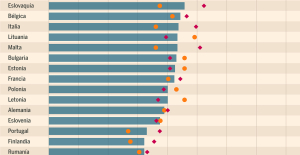

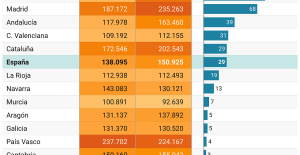

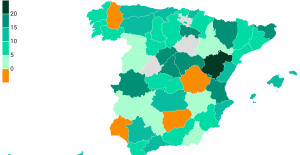

Israel prepares to attack Rafah Spain is the country in the European Union with the most overqualified workers for their jobs

Spain is the country in the European Union with the most overqualified workers for their jobs Parvovirus alert, the “fifth disease” of children which has already caused the death of five babies in 2024

Parvovirus alert, the “fifth disease” of children which has already caused the death of five babies in 2024 Colorectal cancer: what to watch out for in those under 50

Colorectal cancer: what to watch out for in those under 50 H5N1 virus: traces detected in pasteurized milk in the United States

H5N1 virus: traces detected in pasteurized milk in the United States Private clinics announce a strike with “total suspension” of their activities, including emergencies, from June 3 to 5

Private clinics announce a strike with “total suspension” of their activities, including emergencies, from June 3 to 5 The Lagardère group wants to accentuate “synergies” with Vivendi, its new owner

The Lagardère group wants to accentuate “synergies” with Vivendi, its new owner The iconic tennis video game “Top Spin” returns after 13 years of absence

The iconic tennis video game “Top Spin” returns after 13 years of absence Three Stellantis automobile factories shut down due to supplier strike

Three Stellantis automobile factories shut down due to supplier strike A pre-Roman necropolis discovered in Italy during archaeological excavations

A pre-Roman necropolis discovered in Italy during archaeological excavations Searches in Guadeloupe for an investigation into the memorial dedicated to the history of slavery

Searches in Guadeloupe for an investigation into the memorial dedicated to the history of slavery Aya Nakamura in Olympic form a few hours before the Flames ceremony

Aya Nakamura in Olympic form a few hours before the Flames ceremony Psychiatrist Raphaël Gaillard elected to the French Academy

Psychiatrist Raphaël Gaillard elected to the French Academy Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV

Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price"

Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price" The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter

The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter A private jet company buys more than 100 flying cars

A private jet company buys more than 100 flying cars This is how housing prices have changed in Spain in the last decade

This is how housing prices have changed in Spain in the last decade The home mortgage firm drops 10% in January and interest soars to 3.46%

The home mortgage firm drops 10% in January and interest soars to 3.46% The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella

The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella Rental prices grow by 7.3% in February: where does it go up and where does it go down?

Rental prices grow by 7.3% in February: where does it go up and where does it go down? Even on a mission for NATO, the Charles-de-Gaulle remains under French control, Lecornu responds to Mélenchon

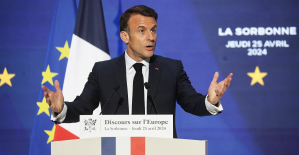

Even on a mission for NATO, the Charles-de-Gaulle remains under French control, Lecornu responds to Mélenchon “Deadly Europe”, “economic decline”, immigration… What to remember from Emmanuel Macron’s speech at the Sorbonne

“Deadly Europe”, “economic decline”, immigration… What to remember from Emmanuel Macron’s speech at the Sorbonne Sale of Biogaran: The Republicans write to Emmanuel Macron

Sale of Biogaran: The Republicans write to Emmanuel Macron Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou

Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou These French cities that will boycott the World Cup in Qatar

These French cities that will boycott the World Cup in Qatar Archery: everything you need to know about the sport

Archery: everything you need to know about the sport Handball: “We collapsed”, regrets Nikola Karabatic after PSG-Barcelona

Handball: “We collapsed”, regrets Nikola Karabatic after PSG-Barcelona Tennis: smash, drop shot, slide... Nadal's best points for his return to Madrid (video)

Tennis: smash, drop shot, slide... Nadal's best points for his return to Madrid (video) Pro D2: Biarritz wins a significant success in Agen and takes another step towards maintaining

Pro D2: Biarritz wins a significant success in Agen and takes another step towards maintaining