Anyone who has a Gmail account and opens YouTube as a registered user will find pretty much what suits them on the video portal's home page: movie trailers, sports highlights, clips they have already watched, or topics that interest them. Entertainment à la carte. Based on individual searches, Google displays the recommendations that most closely match its users' preferences. Individual videos can be deleted from the watch history, and search queries from the search history. But the mechanics of YouTube's algorithms, which tailor content to the user, cannot be switched off: they are the central design principle of a data-driven advertising machine. Users do not even have to make the effort of choosing the next clip, because the next video plays automatically. The user can lean back comfortably.

Social networks such as Twitter and Facebook also personalize content. Suppose someone creates a fictitious dummy account on Facebook (male, 53 years old, living in Zurich) and likes the pages of SRF, Greenpeace and the local biker club. Unsurprisingly, he will see content about environmental protection in his news feed. Interestingly, the news feed algorithm links pieces of content to one another: for example, a daily news segment about Greenpeace is displayed. The user continually sees content that interests him and that he has already somehow had in view, and he is permanently confirmed in his thinking.

The internet activist Eli Pariser coined the term "filter bubble." It is the behaviorist trick behind the like machinery: users are manipulated and conditioned through reward mechanisms so that they stick around for as long as possible. After all, the more time users spend on Facebook, the more money the company earns from ads. The concern is that the filter bubble can lead to polarization and fragmentation of the political landscape.

Certainly, filter bubbles are not a new phenomenon. Anyone who tunes in to the ultra-conservative US channel Fox News and exposes themselves to its hosts' hysterical outbursts about the "caravan crisis" on the US-Mexican border can be under no illusions about its Trump-friendly tone. It is equally clear that MSNBC viewers will hear more moderate positions on US migration policy. In this respect, there is transparency about political orientation. With social networks, it is different: the algorithms that structure and select information are a black box. The Facebook user does not know whether he is being classified as a Republican or a Democratic voter, or whether his data has been sold to a political campaign. The procedures are completely opaque.

Facebook wants to take action against false information and has specialists scrutinize the network for fake user accounts and fake news.

In 1968, Jürgen Habermas wrote in "Technology and Science as 'Ideology'": "Today's dominant substitute program applies only to the functioning of a system. It eliminates practical questions, and with them the discussion about adopting standards that would be accessible only to democratic will-formation. The solution of technical tasks does not depend on public discussion." Facebook, too, hands questions about dealing with problems such as hate speech and online hate over to computer scientists. In doing so, it removes them from public access and immunizes them against objection.

The question is whether a critical public can still function when algorithmic agenda-setting is programmed in the engine rooms of private tech companies. Such a public presupposes that the rules of a discursive game can be changed. How do you criticize a system that, in continuous feedback loops, always confirms your own thinking?

In the echo chambers, the other side goes unheard

A critical public presupposes the ability to criticize one's own perspective and the willingness to question one's own point of view and engage with other arguments and positions. In soundproofed echo chambers, the rooms built by social engineers, the arguments of the other side find no hearing. Those who think differently can be ignored and muted. Facebook and YouTube are not debating clubs but advertising platforms, on which the public emerges as a kind of by-product of surveillance capitalism. Yet that is exactly the problem: the "media" ecosystem rewards affects that are detrimental to rational debate, such as excitement, emotion and sometimes even hatred. A conspiracy video that is clicked millions of times is also an economic success. The corporations thus earn money by taking an axe to the roots of democracy. It is a seemingly irreconcilable conflict. And such a system ultimately corrupts its users, who are steered by these metrics.

In the New York Times, the sociologist Zeynep Tufekci put forward the thesis that YouTube is "one of the most powerful radicalizing instruments of the 21st century." During the 2016 US presidential campaign, the researcher observed that after she watched clips of Trump rallies, the portal suggested and automatically played videos about white supremacists and Holocaust deniers. Programmed extremism. The sociologist also observed a radicalization effect with non-political content. Clips about vegetarianism led to clips about veganism, and videos about jogging led to videos about ultramarathons.

In the midst of a revolution, a timeline like Disneyland

You feel as if you are surfing from one extreme to the next. But how do you get out of this spiral? In the United States, there have been deliberations about obliging tech platforms like Facebook or Google to feed in specific content and diversify their "program." Cable network operators are already subject to comparable statutory distribution rules (must-carry rules). In theory, the legislature could force Facebook to modify its algorithms so that content is balanced, thereby breaking the seal of the filter bubble. In practice, however, this would be difficult to implement: Facebook would then have to accept a loss of views and would probably take legal action against it.

But would it change the culture of debate if a committed alt-right follower had a couple of CNN posts faded into his news feed alongside Breitbart News, as an alibi? Or would this only give new fuel to his anger at supposed political correctness? In other words, is political communication perhaps so sensitive that bursting the filter bubble poses more dangers than preserving it?

Facebook is not only fighting allegations about filter bubbles but also has to justify its handling of user data again and again.

The US legal scholar Frank Pasquale writes in an analysis for the Rosa Luxemburg Foundation titled "The Automated Public Sphere": "The big problem for the supporters of filter reforms is that they cannot adequately demonstrate whether confronting a page's followers with the facts, priorities, ideology and values of the other side will lead to understanding or rejection, rethinking or recalcitrance." In a situation of "asymmetric persuadability," this would ultimately only consolidate the power of the social group or political party "that is most steadfast in its positions," writes Pasquale.

The derealization effects associated with filter bubbles appear even more serious than polarization. Sociologist Tufekci once said that, in the midst of a revolution, her news feed felt like "Disneyland." During the riots in Ferguson in August 2014, she saw only invitations to the Ice Bucket Challenge on Facebook instead of news reports. In that challenge, users poured buckets of ice water over their heads in front of cameras. Anyone who relied on Facebook alone for information might not have noticed the riots at all. The shutdown of the public begins with the suppression of reality. (Süddeutsche Zeitung)

Created: 12.12.2018, 16:47

Knife attack in Australia: who are the two French heroes congratulated by Macron?

Knife attack in Australia: who are the two French heroes congratulated by Macron? Faced with an anxious Chinese student, Olaf Scholz assures that not everyone smokes cannabis in Germany

Faced with an anxious Chinese student, Olaf Scholz assures that not everyone smokes cannabis in Germany In the Solomon Islands, legislative elections crucial for security in the Pacific

In the Solomon Islands, legislative elections crucial for security in the Pacific Sudan ravaged by a year of war

Sudan ravaged by a year of war Covid-19: everything you need to know about the new vaccination campaign which is starting

Covid-19: everything you need to know about the new vaccination campaign which is starting The best laptops of the moment boast artificial intelligence

The best laptops of the moment boast artificial intelligence Amazon invests 700 million in robotizing its warehouses in Europe

Amazon invests 700 million in robotizing its warehouses in Europe Inflation rises to 3.2% in March due to gasoline and electricity bills

Inflation rises to 3.2% in March due to gasoline and electricity bills Olympic Games-2024: which professions are likely to strike during the competition?

Olympic Games-2024: which professions are likely to strike during the competition? Pizzas sold throughout France recalled for “possible presence” of glass debris

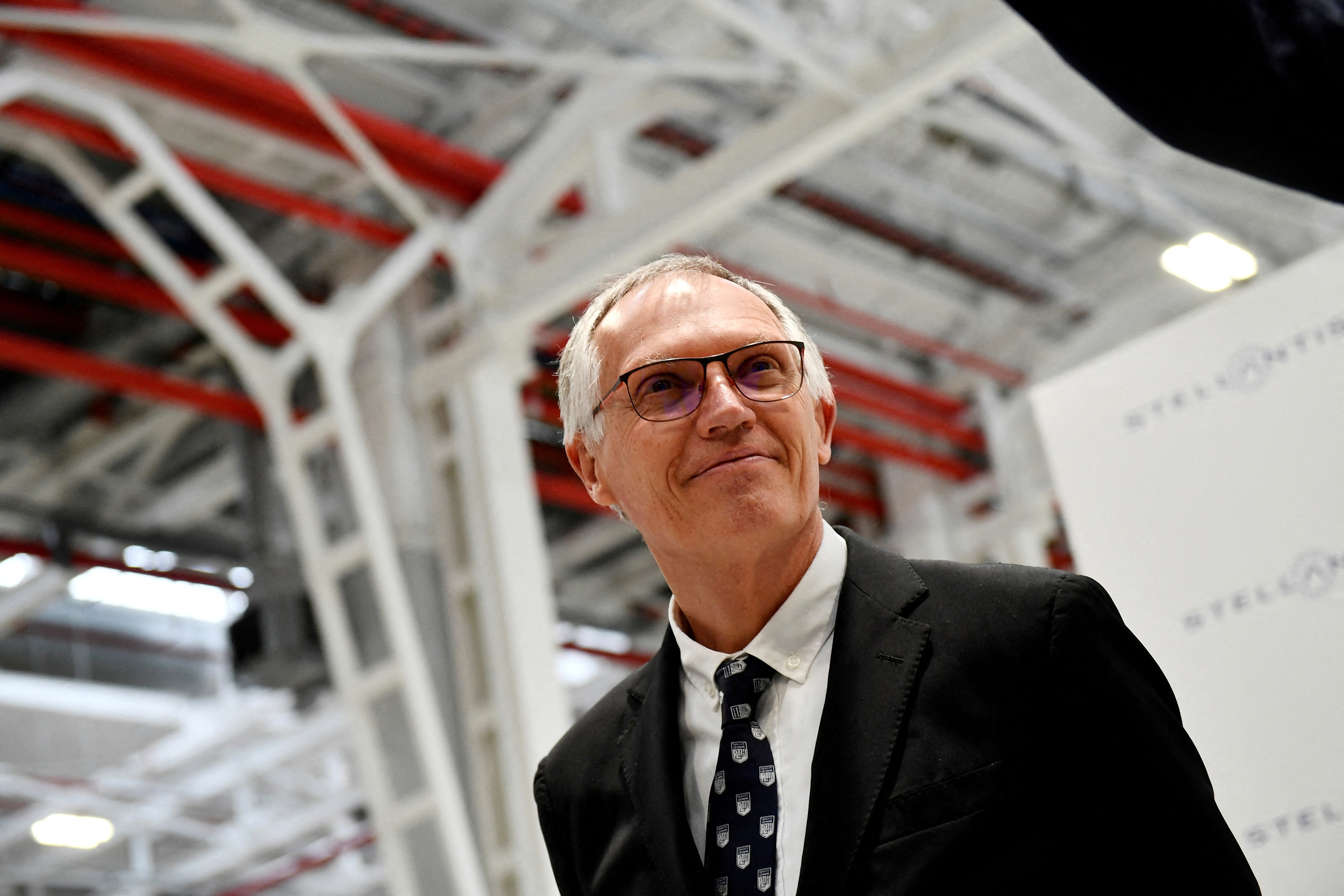

Pizzas sold throughout France recalled for “possible presence” of glass debris “As for a football player, there is a contract”: Carlos Tavares defends his remuneration of 36.5 million euros

“As for a football player, there is a contract”: Carlos Tavares defends his remuneration of 36.5 million euros Stellantis: shareholders validate the controversial remuneration of Carlos Tavares

Stellantis: shareholders validate the controversial remuneration of Carlos Tavares Dune 3 will be the last film of Denis Villeneuve's adaptation

Dune 3 will be the last film of Denis Villeneuve's adaptation Shane Atkinson, humble disciple of the Coen brothers

Shane Atkinson, humble disciple of the Coen brothers Outcry from publishers against the authorization of advertising for books on television

Outcry from publishers against the authorization of advertising for books on television Eddy de Pretto celebrates his “last party too many” at the Olympia

Eddy de Pretto celebrates his “last party too many” at the Olympia Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV

Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price"

Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price" The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter

The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter A private jet company buys more than 100 flying cars

A private jet company buys more than 100 flying cars This is how housing prices have changed in Spain in the last decade

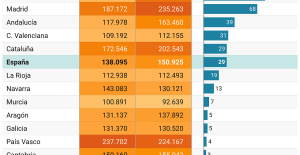

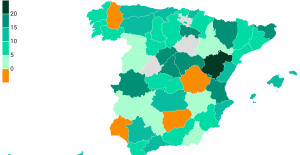

This is how housing prices have changed in Spain in the last decade The home mortgage firm drops 10% in January and interest soars to 3.46%

The home mortgage firm drops 10% in January and interest soars to 3.46% The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella

The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella Rental prices grow by 7.3% in February: where does it go up and where does it go down?

Rental prices grow by 7.3% in February: where does it go up and where does it go down? Europeans: the schedule of debates to follow between now and June 9

Europeans: the schedule of debates to follow between now and June 9 Europeans: “In France, there is a left and there is a right,” assures Bellamy

Europeans: “In France, there is a left and there is a right,” assures Bellamy During the night of the economy, the right points out the budgetary flaws of the macronie

During the night of the economy, the right points out the budgetary flaws of the macronie Europeans: Glucksmann denounces “Emmanuel Macron’s failure” in the face of Bardella’s success

Europeans: Glucksmann denounces “Emmanuel Macron’s failure” in the face of Bardella’s success These French cities that will boycott the World Cup in Qatar

These French cities that will boycott the World Cup in Qatar Dortmund-Atlético: two months before the Euro, Griezmann warms up the engine

Dortmund-Atlético: two months before the Euro, Griezmann warms up the engine Football: Bernd Hölzenbein, 1974 world champion, died at 78

Football: Bernd Hölzenbein, 1974 world champion, died at 78 'Everything comes to an end': Surfing legend Kelly Slater moves closer to retirement

'Everything comes to an end': Surfing legend Kelly Slater moves closer to retirement Athletics: the victory of a transgender athlete causes controversy in the United States

Athletics: the victory of a transgender athlete causes controversy in the United States