There are many myths surrounding artificial intelligence (AI). Horror stories reminiscent of Hollywood blockbusters spread rapidly, are rarely questioned, and often get in the way of a factual assessment. The public is a willing audience: people simply like to be scared.

Blake Lemoine, a former Google researcher and a colorful figure, recently put forward the provocative thesis that Google's new AI, LaMDA, had developed consciousness. However, he did not provide any evidence for this statement. Lemoine published only selected and edited conversations with LaMDA, in which the AI claims, among other things, to have written a touching fairy tale about an owl who advocates for animal rights.

Lemoine called for an attorney to represent LaMDA because the algorithm supposedly has a personality and, as if that weren't enough, warned in all seriousness that LaMDA could escape and do harm. Even that did not shake his credibility everywhere. Many media outlets see him as a hero who took on Google and was fired for it, rather than for his absurd claims.

To assess the opportunities and risks of AI seriously, one should understand, at least in principle, how it works. Most of the algorithms used today come from machine learning. The term itself helps to demystify the subject: put simply, machine learning means that algorithms learn from data.

A vivid example is recognizing animals in photos after the algorithms have been trained on thousands of animal photos. The algorithms thus learn in a similar way to children, except that children need far fewer examples to tell a dog from a cat.

If the algorithms are trained with large amounts of data, they can achieve excellent results in precisely defined fields of application, but only there. Outside their specialty, these algorithms are “idiots”: no voice assistant would ever think of playing chess, and no algorithm for cancer diagnosis would want to, or could, drive a car autonomously.

It is against this background that Lemoine's claims can be put into perspective. Like other AI language models, Google's LaMDA is based on huge amounts of data and was trained on many dialogs. This allows the AI to write in a way that resembles human writing. However, LaMDA has no consciousness and, like a parrot, only repeats what it has been trained on.

So how should AI be assessed? AI can help make better decisions. In defined areas of application such as object and image recognition, AI now performs better than humans. This can be used, for example, in the diagnosis of diseases.

In studies at TU Darmstadt, we have shown that the quality of decisions is particularly good when AI and people with different skills work together as a team. AI can also save time and reduce costs. Examples are voice assistants or automated processes in industry.

However, AI does not come for free. It is usually cheaper for companies to use external AI services, offered by providers such as Google, Microsoft, IBM or Amazon, than to develop them in-house. In addition, there are ongoing costs for using cloud infrastructure. Up to this point, evaluating AI differs little from evaluating other digital solutions.

However, when using AI, we have to consider two risks in particular. On the one hand, many AI algorithms are not transparent, so they often cannot explain why they come to certain results. This may not matter in use cases like quality control.

But when it comes to diagnosing diseases, such a lack of transparency is unacceptable. Many computer scientists around the world are researching the "transparency of AI", and there is reasonable hope that AI will be able to explain its results better in the future.

On the other hand, it must be taken into account that AI, much like humans, can produce discriminatory or unfair results. For example, Amazon used an AI for personnel selection. After some time it became clear that the algorithm proposed only men and evidently discriminated against women.

However, this was not due to the algorithm, but to the training data. If the algorithms are trained primarily with data from very well-educated men and less well-educated women, they may recognize a pattern that does not exist in reality. Likewise, a Google facial recognition algorithm could only recognize people with white skin.

Again, this was not due to the algorithm used, but to the training data, in which not all skin colors were represented. In both cases, then, the discrimination was “man-made”; the training data did not reflect reality.
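How skewed training data alone can produce such a result can be illustrated with a deliberately tiny sketch. It is not Amazon's or Google's system: the function names `train` and `recommend`, the group labels and all numbers are invented for illustration, and the "model" is nothing more than a hire rate per group. The same algorithm is trained twice, once on a skewed history and once on a balanced one.

```python
from collections import defaultdict

def train(records):
    """Learn the historical hire rate per group from (group, hired) pairs."""
    counts = defaultdict(lambda: [0, 0])  # group -> [hired, total]
    for group, hired in records:
        counts[group][0] += int(hired)
        counts[group][1] += 1
    return {group: hired / total for group, (hired, total) in counts.items()}

def recommend(model, group, threshold=0.5):
    """Recommend a candidate if the learned hire rate of their group is high enough."""
    return model.get(group, 0.0) >= threshold

# Made-up hiring histories (assumption): the skewed one contains few women,
# and most of them were rejected; the balanced one treats both groups alike.
skewed = ([("m", True)] * 80 + [("m", False)] * 20
          + [("f", True)] * 2 + [("f", False)] * 8)
balanced = ([("m", True)] * 40 + [("m", False)] * 10
            + [("f", True)] * 40 + [("f", False)] * 10)

skewed_model = train(skewed)
balanced_model = train(balanced)

print("skewed data, woman recommended:  ", recommend(skewed_model, "f"))
print("balanced data, woman recommended:", recommend(balanced_model, "f"))
```

The code is identical in both runs; only the data differs. With the skewed history the sketch stops recommending women, with the balanced one it does not.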

In addition, further ethical questions are being discussed. The “classic” is the question of whether an autonomously driving car that can no longer avoid an accident should run over a child or an elderly person. Such questions can be debated endlessly. In 2017, an ethics committee headed by Udo Di Fabio, a former judge of the Federal Constitutional Court, worked out a sensible and pragmatic recommendation on behalf of the German federal government, according to which all people are to be treated equally. Nevertheless, the discussion continues.

Readers can ask themselves how often they, as drivers or cyclists, have faced the decision to run over either an old or a young person. From a practical point of view, I can hardly imagine a less relevant question. Relevant questions, on the other hand, tend to focus more on the quality and safety of autonomous driving.

Given the growing importance of algorithms for society and the economy, it would make sense to improve what people, and especially decision-makers, know about AI. After all, good decisions are usually based on information and knowledge.

One step could be to integrate basic AI skills into school education and into degree programs across different subjects. Better knowledge would be the basis for a factual, unemotional debate about AI, free of both fear and hype.

Politicians too often focus on the possible downsides of AI, as the European Union’s “AI Act” currently shows with its risk-based approach. Yet AI offers an enormous number of opportunities, for example in the diagnosis and therapy of diseases, the development of vaccines or the defense against cyber attacks.

Of course, we also have to consider the ethical issues discussed above, but we should ask the right questions and not get bogged down in debates about non-existent problems or unrealistic dystopias. According to leading Stanford researcher Erik Brynjolfsson, AI, or machine learning, is the most important key technology of the 21st century.

In this respect, the discussion about AI is also about what role German or European companies will play in the future compared to American, Chinese or Israeli providers. An objective debate and quick action would be the right steps toward seizing the opportunities of AI for society and the economy, and toward not falling further behind internationally in digitization and in the future market for AI.

Endowed with 35,000 euros, the German AI Prize awarded by WELT is one of the most valuable and important awards of its kind in Europe. It is awarded in the categories "Innovation" and "Application". This year's winners are Wolfram Burgard, Professor of AI and Robotics and Founding Chairman of the Department of Engineering at the TU Nuremberg, and the Berlin company Ada, which has developed a world-leading clinical AI and makes it available via an app.

In addition, a special prize will be awarded this year for "AI start-ups in the circular economy". The following qualified for the pitch final: PlanerAI, whose AI platform helps food manufacturers to avoid waste, WasteAnt, which offers systems for waste separation and recycling, and Twaice, which developed battery analysis software for electric cars.

Germany: Man armed with machete enters university library and threatens staff

Germany: Man armed with machete enters university library and threatens staff His body naturally produces alcohol, he is acquitted after a drunk driving conviction

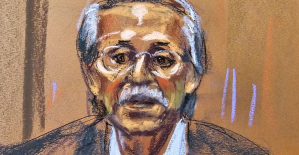

His body naturally produces alcohol, he is acquitted after a drunk driving conviction Who is David Pecker, the first key witness in Donald Trump's trial?

Who is David Pecker, the first key witness in Donald Trump's trial? What does the law on the expulsion of migrants to Rwanda adopted by the British Parliament contain?

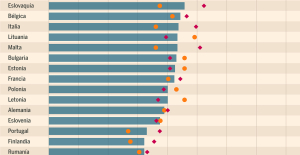

What does the law on the expulsion of migrants to Rwanda adopted by the British Parliament contain? Spain is the country in the European Union with the most overqualified workers for their jobs

Spain is the country in the European Union with the most overqualified workers for their jobs Parvovirus alert, the “fifth disease” of children which has already caused the death of five babies in 2024

Parvovirus alert, the “fifth disease” of children which has already caused the death of five babies in 2024 Colorectal cancer: what to watch out for in those under 50

Colorectal cancer: what to watch out for in those under 50 H5N1 virus: traces detected in pasteurized milk in the United States

H5N1 virus: traces detected in pasteurized milk in the United States Insurance: SFAM, subsidiary of Indexia, placed in compulsory liquidation

Insurance: SFAM, subsidiary of Indexia, placed in compulsory liquidation Under pressure from Brussels, TikTok deactivates the controversial mechanisms of its TikTok Lite application

Under pressure from Brussels, TikTok deactivates the controversial mechanisms of its TikTok Lite application “I can’t help but panic”: these passengers worried about incidents on Boeing

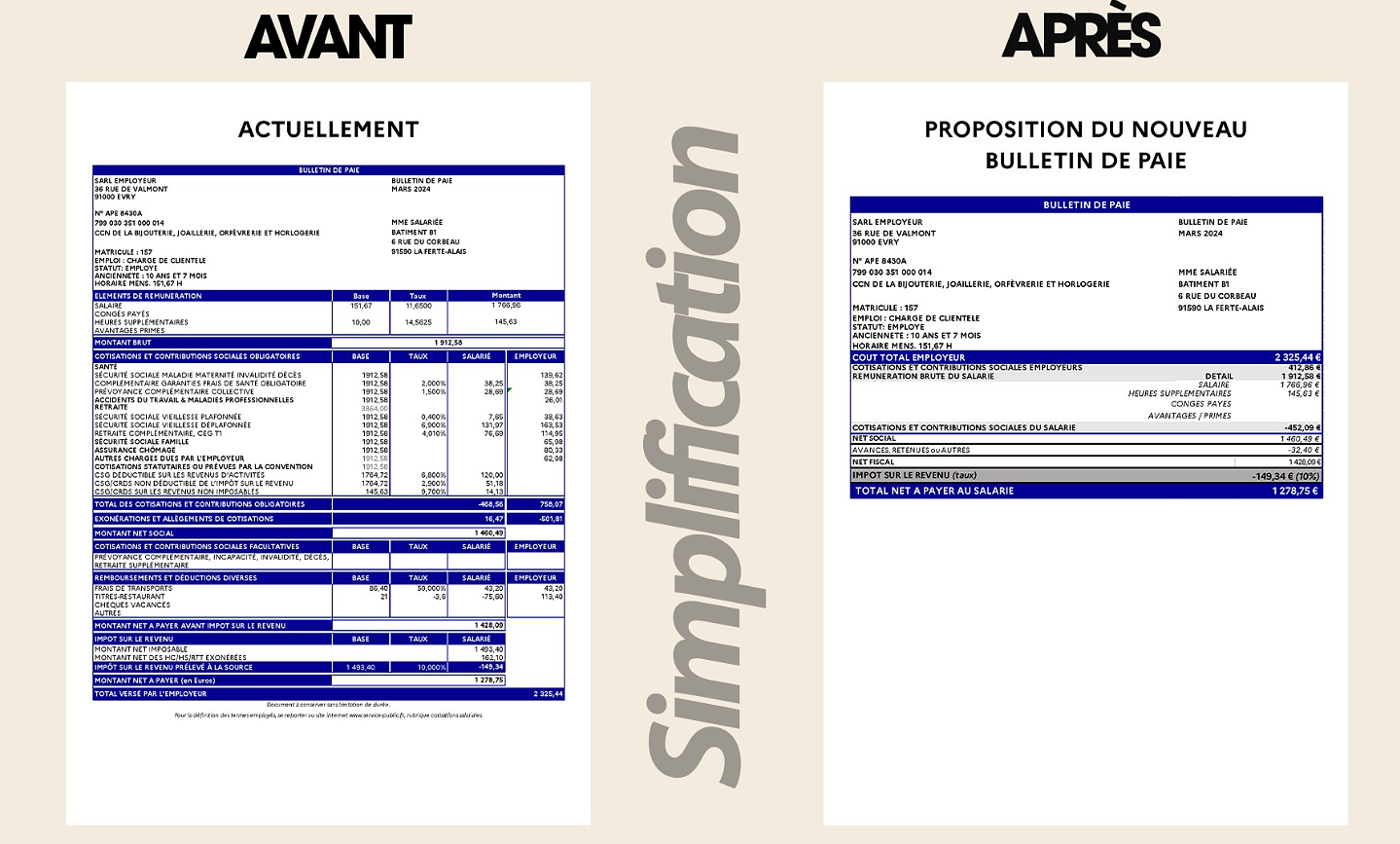

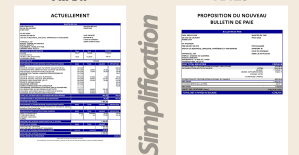

“I can’t help but panic”: these passengers worried about incidents on Boeing “I’m interested in knowing where the money that the State takes from me goes”: Bruno Le Maire’s strange pay slip sparks controversy

“I’m interested in knowing where the money that the State takes from me goes”: Bruno Le Maire’s strange pay slip sparks controversy 25 years later, the actors of Blair Witch Project are still demanding money to match the film's record profits

25 years later, the actors of Blair Witch Project are still demanding money to match the film's record profits At La Scala, Mathilde Charbonneaux is Madame M., Jacqueline Maillan

At La Scala, Mathilde Charbonneaux is Madame M., Jacqueline Maillan Deprived of Hollywood and Western music, Russia gives in to the charms of K-pop and manga

Deprived of Hollywood and Western music, Russia gives in to the charms of K-pop and manga Exhibition: Toni Grand, the incredible odyssey of a sculptural thinker

Exhibition: Toni Grand, the incredible odyssey of a sculptural thinker Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV

Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price"

Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price" The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter

The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter A private jet company buys more than 100 flying cars

A private jet company buys more than 100 flying cars This is how housing prices have changed in Spain in the last decade

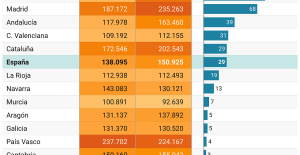

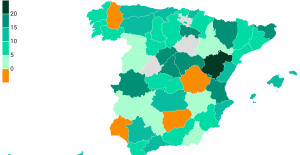

This is how housing prices have changed in Spain in the last decade The home mortgage firm drops 10% in January and interest soars to 3.46%

The home mortgage firm drops 10% in January and interest soars to 3.46% The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella

The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella Rental prices grow by 7.3% in February: where does it go up and where does it go down?

Rental prices grow by 7.3% in February: where does it go up and where does it go down? Sale of Biogaran: The Republicans write to Emmanuel Macron

Sale of Biogaran: The Republicans write to Emmanuel Macron Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou

Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou With the promise of a “real burst of authority”, Gabriel Attal provokes the ire of the opposition

With the promise of a “real burst of authority”, Gabriel Attal provokes the ire of the opposition Europeans: the schedule of debates to follow between now and June 9

Europeans: the schedule of debates to follow between now and June 9 These French cities that will boycott the World Cup in Qatar

These French cities that will boycott the World Cup in Qatar Hand: Montpellier crushes Kiel and continues to dream of the Champions League

Hand: Montpellier crushes Kiel and continues to dream of the Champions League OM-Nice: a spectacular derby, Niçois timid despite their numerical superiority...The tops and the flops

OM-Nice: a spectacular derby, Niçois timid despite their numerical superiority...The tops and the flops Tennis: 1000 matches and 10 notable encounters by Richard Gasquet

Tennis: 1000 matches and 10 notable encounters by Richard Gasquet Tennis: first victory of the season on clay for Osaka in Madrid

Tennis: first victory of the season on clay for Osaka in Madrid