"Dear people of Belgium. This is great. As you know, I have had the balls to pull the United States out of the Paris Agreement. So should you," says Trump in a video that appeared on social media in May of last year.

The video appears to show Donald Trump, but the person in the video, which was produced by the Belgian political party Socialistische Partij Anders (sp.a), is not the U.S. president, writes The Economist.

- An inattentive viewer can be fooled, but in the Trump clip you can see that the lip movements and the audio don't match, says Torgeir Waterhouse, director of Internet and new media at ICT Norway, to Dagbladet.

If you did not notice at first glance that the video is fake, the Trump impersonator makes it clear at the end of the clip:

"We all know that climate change is imaginary. Just like this video".

Easy to falsify video footage

That images can easily be manipulated is well known. Less well known is that videos are now becoming just as vulnerable to manipulation.

So-called "deepfake" videos can be created with images that are retrieved from, for example, social media.

- It is about making fake films using available images, computing power and technologies that can manipulate and easily forge videos. It is video that we believe to be true, says Waterhouse.

Fears political attacks in Norway

While the Trump video is not very realistic, a warning video featuring Barack Obama shows how realistic manipulated video footage can be.

In the video, which was created by BuzzFeed last year, the former U.S. president, among other things, calls Donald Trump a "bastard". In reality, it was film director Jordan Peele who impersonated the former president.

Waterhouse does not rule out that this type of video manipulation could be used for politically targeted attacks in Norway.

- I fear that individuals or groups may attack parties and democracy by using it against certain politicians or parties. I don't think the serious parties will do it, but that does not rule out that someone might, he says.

According to Waterhouse, it is not difficult to weaken a political opponent, if you really want to.

- You can imagine a power struggle within a party. It is not difficult to defame a political adversary by cutting together audio from a private conversation or video that was filmed covertly. Just look at the recent debate about the abortion law here in Norway. Who said what and when was incredibly important. With film and audio clips taken out of context, it could have been easy to manipulate the debate, he says.

U.S. election

Tor Henning Ueland, senior at Very, believes Norway is in no way spared from the phenomenon.

- Everything that can be used as a weapon will be used as one, including so-called deepfake videos. Although I am not aware that such videos have been used yet, it is not a question of if, but when, such videos will be used, he says.

Ueland points out that fake news, conspiracy theories and influence campaigns are more widespread than ever before. He does not rule out that we may see this in the run-up to and during the American election campaign in 2020.

- It can come from any of the candidates' supporters, or from external parties who want to influence the outcome in one direction or the other, he says.

Risk of extortion

According to the Washington Post, these videos now also represent a new way to harass women.

Images of women's faces are retrieved from social media and manipulated onto the bodies of porn actors.

Waterhouse is not aware of cases where deepfake videos have been used to malign people in Norway, but:

- It is important to look at what can happen in 10-15 years. This represents a significant risk of abuse, for example by manipulating people into porn movies in order to harass them or extort money. It is a bit like image editing. From having to use a lot of computing power for image manipulation, one can now do this with a smartphone, says Waterhouse.

Norwegian milliardærarving abused on Facebook: - We are aware of it

His body naturally produces alcohol, he is acquitted after a drunk driving conviction

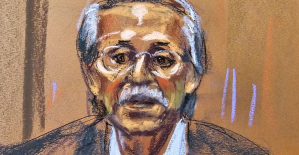

His body naturally produces alcohol, he is acquitted after a drunk driving conviction Who is David Pecker, the first key witness in Donald Trump's trial?

Who is David Pecker, the first key witness in Donald Trump's trial? What does the law on the expulsion of migrants to Rwanda adopted by the British Parliament contain?

What does the law on the expulsion of migrants to Rwanda adopted by the British Parliament contain? The shadow of Chinese espionage hangs over Westminster

The shadow of Chinese espionage hangs over Westminster Colorectal cancer: what to watch out for in those under 50

Colorectal cancer: what to watch out for in those under 50 H5N1 virus: traces detected in pasteurized milk in the United States

H5N1 virus: traces detected in pasteurized milk in the United States What High Blood Pressure Does to Your Body (And Why It Should Be Treated)

What High Blood Pressure Does to Your Body (And Why It Should Be Treated) Vaccination in France has progressed in 2023, rejoices Public Health France

Vaccination in France has progressed in 2023, rejoices Public Health France The right deplores a “dismal agreement” on the end of careers at the SNCF

The right deplores a “dismal agreement” on the end of careers at the SNCF The United States pushes TikTok towards the exit

The United States pushes TikTok towards the exit Air traffic controllers strike: 75% of flights canceled at Orly on Thursday, 65% at Roissy and Marseille

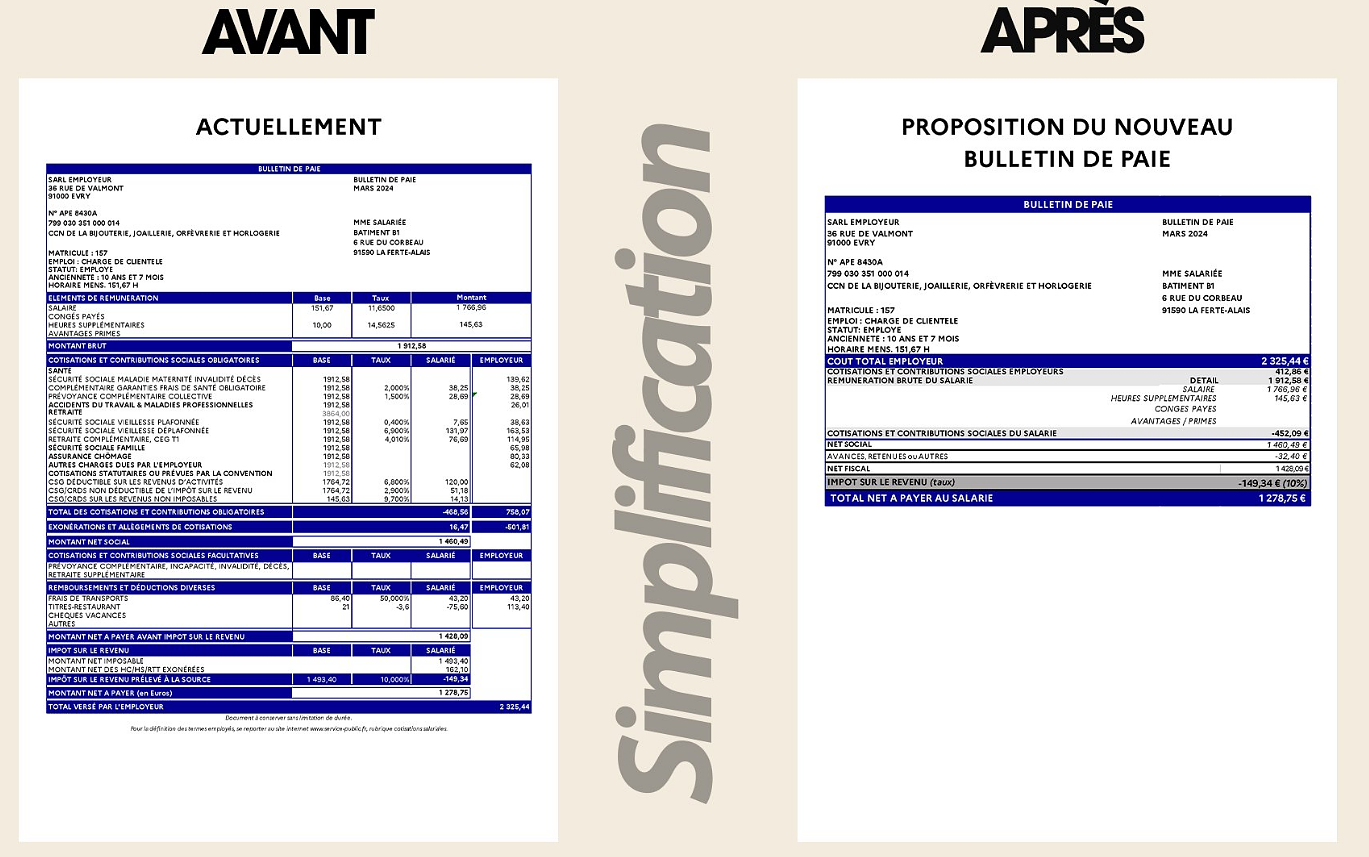

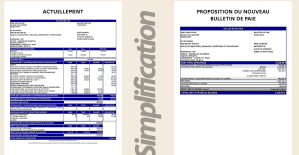

Air traffic controllers strike: 75% of flights canceled at Orly on Thursday, 65% at Roissy and Marseille This is what your pay slip could look like tomorrow according to Bruno Le Maire

This is what your pay slip could look like tomorrow according to Bruno Le Maire Sky Dome 2123, Challengers, Back to Black... Films to watch or avoid this week

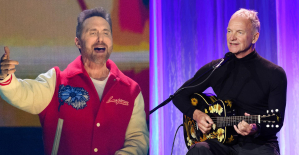

Sky Dome 2123, Challengers, Back to Black... Films to watch or avoid this week The standoff between the organizers of Vieilles Charrues and the elected officials of Carhaix threatens the festival

The standoff between the organizers of Vieilles Charrues and the elected officials of Carhaix threatens the festival Strasbourg inaugurates a year of celebrations and debates as World Book Capital

Strasbourg inaugurates a year of celebrations and debates as World Book Capital Kendji Girac is “out of the woods” after his gunshot wound to the chest

Kendji Girac is “out of the woods” after his gunshot wound to the chest Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV

Skoda Kodiaq 2024: a 'beast' plug-in hybrid SUV Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price"

Tesla launches a new Model Y with 600 km of autonomy at a "more accessible price" The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter

The 10 best-selling cars in March 2024 in Spain: sales fall due to Easter A private jet company buys more than 100 flying cars

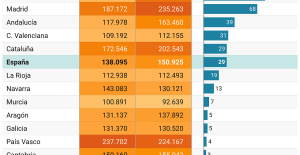

A private jet company buys more than 100 flying cars This is how housing prices have changed in Spain in the last decade

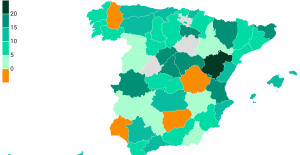

This is how housing prices have changed in Spain in the last decade The home mortgage firm drops 10% in January and interest soars to 3.46%

The home mortgage firm drops 10% in January and interest soars to 3.46% The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella

The jewel of the Rocío de Nagüeles urbanization: a dream villa in Marbella Rental prices grow by 7.3% in February: where does it go up and where does it go down?

Rental prices grow by 7.3% in February: where does it go up and where does it go down? Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou

Europeans: “All those who claim that we don’t need Europe are liars”, criticizes Bayrou With the promise of a “real burst of authority”, Gabriel Attal provokes the ire of the opposition

With the promise of a “real burst of authority”, Gabriel Attal provokes the ire of the opposition Europeans: the schedule of debates to follow between now and June 9

Europeans: the schedule of debates to follow between now and June 9 Europeans: “In France, there is a left and there is a right,” assures Bellamy

Europeans: “In France, there is a left and there is a right,” assures Bellamy These French cities that will boycott the World Cup in Qatar

These French cities that will boycott the World Cup in Qatar NBA: the Wolves escape against the Suns, Indiana unfolds and the Clippers defeated

NBA: the Wolves escape against the Suns, Indiana unfolds and the Clippers defeated Real Madrid: what position will Mbappé play? The answer is known

Real Madrid: what position will Mbappé play? The answer is known Cycling: Quintana will appear at the Giro

Cycling: Quintana will appear at the Giro Premier League: “The team has given up”, notes Mauricio Pochettino after Arsenal’s card

Premier League: “The team has given up”, notes Mauricio Pochettino after Arsenal’s card